Arjun Krishna

7+ years of software work experience with domain expertise in LLM foundation model training and inference, distributed cloud infrastructure for ML and big data pipelines. Demonstrated experience in technical leadership, leading cross-organizational initiatives, establishing technical standards and best practices.

Skills

- Python

- C++

- Java

- JavaScript

- TypeScript

- C

- Objective-C

- MATLAB

- Ruby

- PyTorch

- TensorFlow

- Transformers

- JAX

- NeMo

- DeepSpeed

- PEFT

- vLLM

- SGLang

- TensorRT-LLM

- LangChain

- Keras

- OpenCV

- SparkML

- CUDA

- Scikit-learn

- XGBoost

- NumPy

- Pandas

- Ray

- NCCL

- Flask

- React

- Node.js

- Kubernetes

- Slurm

- Hadoop

- Spark

- Hive

- Flink

- MySQL

- DynamoDB

- PostgreSQL

- MongoDB

- Apache RDD

- IndexedDB

- Cassandra

- Pinecone

- HuggingFace

- Git

- Perforce

- Docker

- OpenRouter

- GitHub Copilot

- Cursor

- Claude Code

- VSCode

- IntelliJ

- Visual Studio

- PyCharm

- NGINX

- AWS

- Linux

- Mac

- Windows

- Android

- Xcode

- Apple

- Machine Learning

- Big Data Analysis

- AWS (NAWS) Cloud Development

- Cloud Deployment Infrastructure

- Test Automation Infrastructure

- Database Internals

- Data Analysis

- Storage Scalability

- System Design

- Computer Vision

- Natural Language Processing

- Image Processing

- Color Management

- Web Development

- Android Development

Creations

A collection of projects authored by Arjun, likely shared with the community as open source projects.

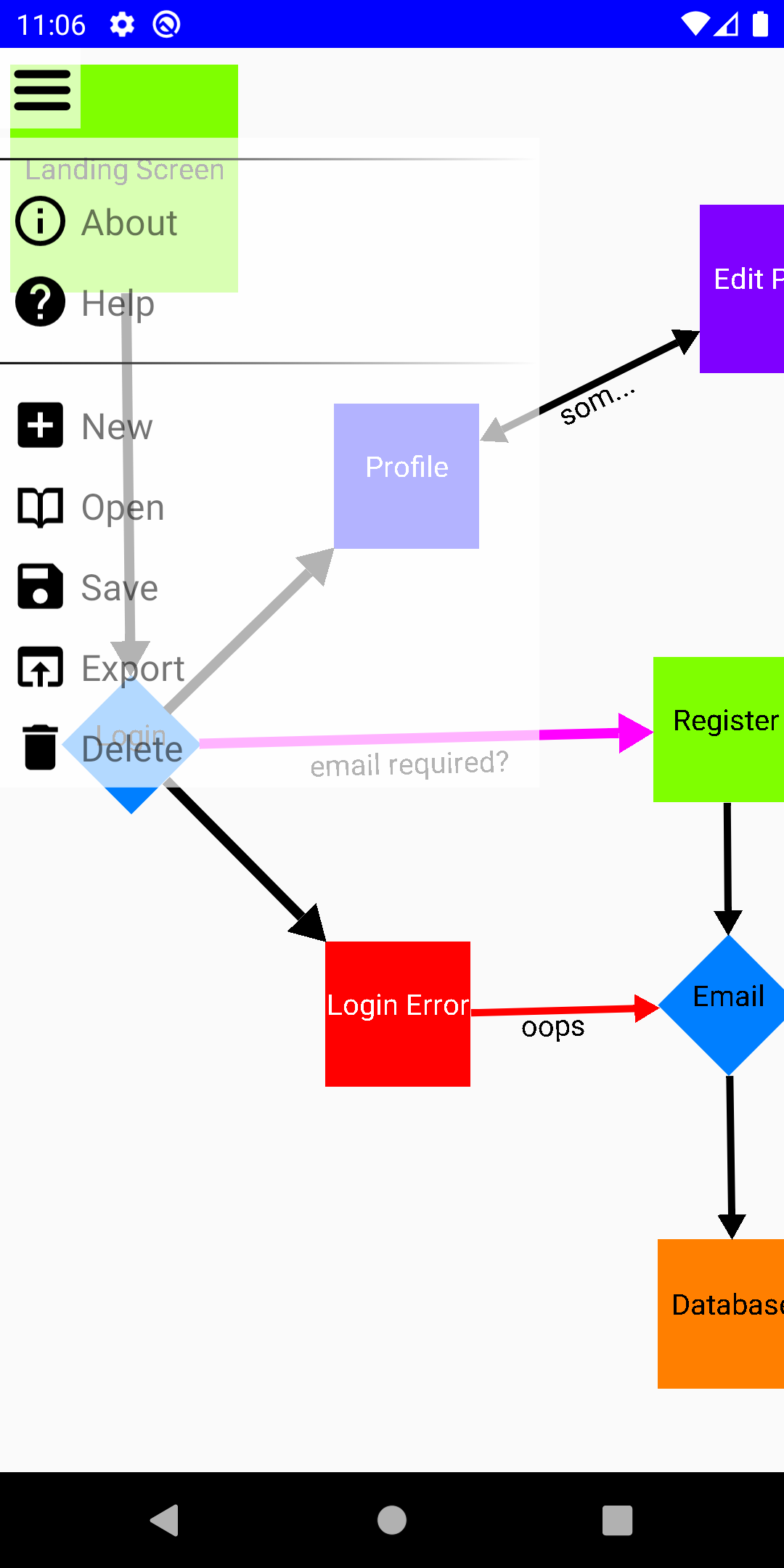

Express Flowcharts Android app

Express Wiki

Express Wiki gives you a personal online Wikipedia, powered by AI, so you can read and organize your own wiki.

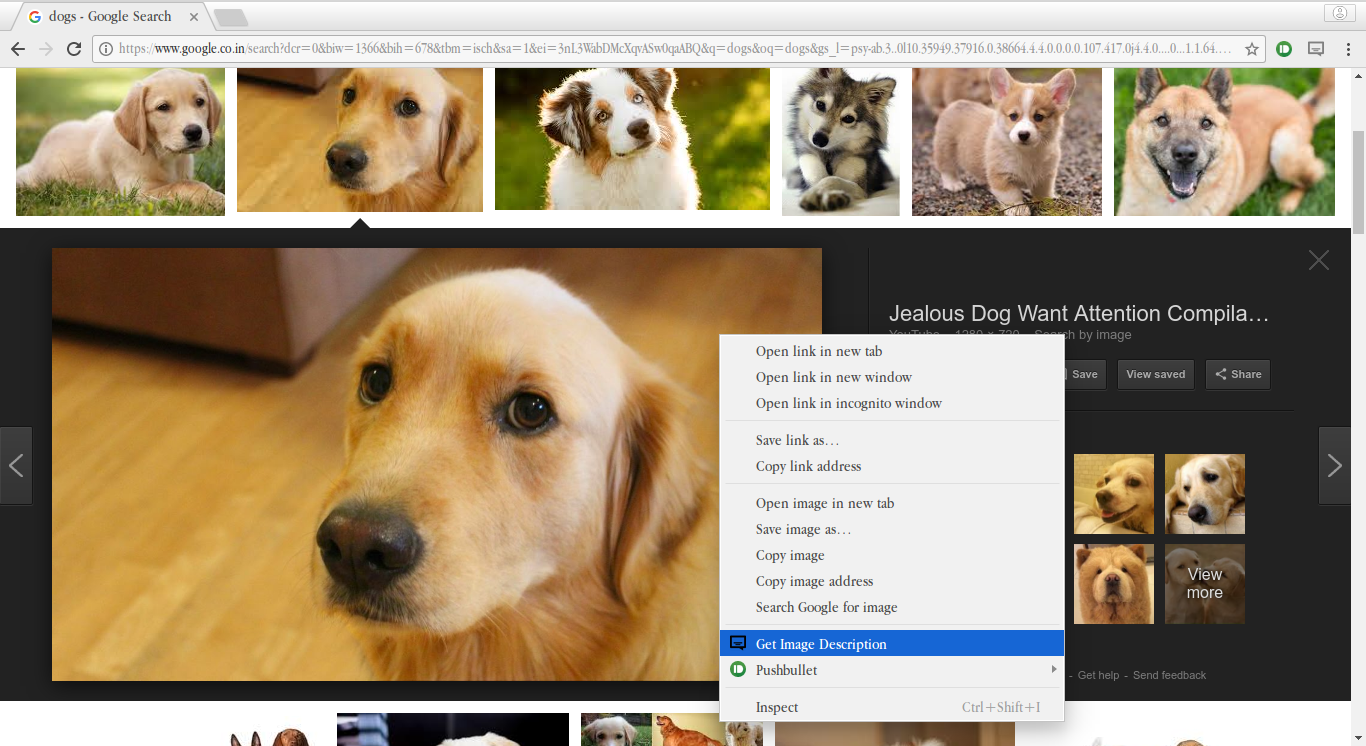

Google Chrome extension to caption images using RCNN

A Google Chrome extension that generates a caption for an image using the RCNN deep learning network.

Open Source Contributions

A collection of projects I contributed to but did not create. Contributing back to open source is a strong passion of mine, and getting a change merged requires a considerate approach: learning the norms, standards, and workflow of each community.

Cyclical Learning Rate (CLR)

Worked and experimented with the CLR algorithm, implemented in Keras, which uses cyclical learning rates to train deep neural networks.
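For readers unfamiliar with the idea, here is a minimal sketch of a cyclical learning rate schedule. It assumes the common triangular policy, in which the rate ramps linearly from a lower bound to an upper bound and back once per cycle; the function name `triangular_clr` and the parameter values are hypothetical and chosen only for illustration. This is not the Keras implementation referenced above.

```python
import math


def triangular_clr(iteration: int, base_lr: float, max_lr: float, step_size: int) -> float:
    """Learning rate at a given iteration under a triangular cyclical policy.

    Hypothetical helper for illustration; names and defaults are assumptions.
    """
    # One full cycle spans 2 * step_size iterations (ramp up, then ramp down).
    cycle = math.floor(1 + iteration / (2 * step_size))
    # x is the normalized distance from the peak of the current cycle:
    # 1 at the cycle boundaries, 0 at the peak.
    x = abs(iteration / step_size - 2 * cycle + 1)
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)


if __name__ == "__main__":
    # Print the schedule at a few points across one cycle.
    for it in (0, 500, 1000, 1500, 2000):
        print(it, triangular_clr(it, base_lr=0.001, max_lr=0.006, step_size=1000))
```

In a training loop, a function like this could be called once per batch to set the optimizer's learning rate, so the rate sweeps between the two bounds instead of decaying monotonically.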

Text detection in natural scenes

Achievements

AWS Aurora hackathon

Won the 2021 Aurora hackathon for developing a novel prefetch algorithm for data read-ahead in the Aurora MySQL 8.0 engine.

Kudos award Adobe

Won the prestigious Kudos award in 2020 for my contributions at CoreTech, Adobe, among a team of 100+ builders.

Winner of Adobe shark tank

Won the Adobe Shark Tank event for the best product idea pitch among 100+ new hires.

Best B.Tech. project award

Received the Best B.Tech. Project Award from the director of IIT Roorkee, selected from among 1,816 students.

KVPY national scholarship fellow

Secured an All India Rank of 401 among ~150,000 participants in the Kishore Vaigyanik Protsahan Yojana (KVPY).

District topper of All India Senior School Certificate Examination 2015

Received a certificate from India's education minister for the All India Senior School Certificate Examination 2015.

Cleared First Stage of CODEFUNDO

Cleared First Level of International Chemistry Olympiad

Publications

Thesis - Video segment copy detection

Experience

Senior Machine Learning Engineer

Led a cross-organizational effort across engineering, research, and infosec teams to architect and launch Palmyra self-evolving models, which surpass the performance ceiling of frozen LLMs on enterprise agentic workflows by 23% through continuous learning from model interactions. Led a team of 7 engineers to develop Palmyra X5, achieving GPT-4.1-level performance at 4x lower cost with state-of-the-art latency (22s at 1M tokens).

Senior Software Development Engineer

Led the development and launch of SageMaker HyperPod Distributed Training Recipes with a team of 10, including senior ICs, and drove cross-team collaborations and integrations. These open-source recipes (80+ stars on GitHub) automate training by loading datasets, applying distributed training techniques, and managing checkpoints. Led the SageMaker Distributed Model Parallel library, delivering up to 20% performance gains over PyTorch FSDP for large-scale LLM training. Led a team of 5 ICs to improve parallelism for LLMs such as Llama, Qwen, and GLM.

Software Development Engineer

Developed machine learning ecosystem infrastructure for Aurora, including Aurora ZeroETL and the Bedrock and SageMaker integrations, enabling native ML workflows for Aurora customers. Developed a novel prefetch algorithm for data read-ahead that clusters data access patterns, increasing SQL read throughput by 30%.

Software Development Engineer

Developed Genshop, Adobe's core deep learning library for generative models, adopted across multiple products. Implemented CPU and GPU workflows with CUDA primitives for Adobe color, graphics & font engine.

Research Intern

Built a personalized document-highlighting system that used prior reader interactions to surface the most relevant content in new documents. Incorporated information retrieval and reinforcement learning techniques for keyword extraction.

Research Assistant

Researched action recognition with feature fusion, combining traditional computer vision techniques with deep learning to improve action recognition accuracy by 15%.